I first started talking to Robert Rice, CEO of Neogence Enterprises and Chairman of the AR Consortium, in 2008. Robert was already actively working on creating the world’s first global augmented reality network. But it took a few months before what Robert had said to me about the impending explosion of augmented reality into our lives really sank in – “this is going to be much bigger than the Web!” he exclaimed.

By January 2009 I was convinced, and I posted my first interview with Robert, “Is it OMG Finally for Augmented Reality?..” As I mentioned in the intro, I had recently tried out Wikitude and Nathan Freitas’s graffiti app on the streets of New York City and I was impressed. Now, 7 months later, Augmented Reality has not disappointed: there is an explosion of new applications, and the arrival of some of the first commercial and practical toolsets, SDKs, and APIs for aspiring developers.

For more on this see my previous post, Augmented Reality’s Growth is Exponential: Ogmento – “Reality Reinvented,” talking with Ori Inbar, which is an introduction to my series of interviews with the key players in augmented reality and founding members of the ARConsortium – Int13, Metaio, Mobilizy, Neogence Enterprises, Ogmento, SPRXmobile, Tonchidot, and Total Immersion.

As I mentioned before, Maarten Lens-FitzGerald of SPRXmobile told me the other day that my first Interview with Robert Rice, in January of this year, was a key inspiration for SPRXmobile to get started on the development of Layar – a Mobile Augmented Reality Browser. Much more on Layar and Wikitude – world browser in my upcoming interviews with Maarten Lens-FitzGerald and Mark A. M. Kramer, respectively.

Recently, both Layar and Wikitude earned a mention in the white paper by Tim O’Reilly and John Battelle, Web Squared: Web 2.0 Five Years On. Web Squared is essential reading not only because it covers the underlying technological shifts of “Web Meets World,” which augmented reality is a vital part of; but, crucially, Web Squared focuses on how there is a new opportunity for us all:

“The new direction for the Web, its collision course with the physical world, opens enormous new possibilities for business, and enormous new possibilities to make a difference on the world’s most pressing problems.”

I am currently working on a post on Green Tech AR, which is one of the areas where augmented reality can play an important role “in solving the world’s most pressing problems.” Augmented Reality has a lot to offer Green Tech development. As Gavin Starks of AMEE said at Euro Foo in 2006, “climate change would be much easier to solve if you could see CO2.”

But really useful Green Tech AR requires still-hard-to-do markerless object recognition (going beyond feature tracking and modified marker recognition), and a tight alignment of media/graphics with physical objects, in addition to quite a high level of instrumentation of the physical world. And for Green Tech AR to really shine, we are going to need innovators like Robert Rice who are working on, and solving, multiple really hard problems like:

“privacy, media persistence, spam, creating UI conventions, security, tagging and annotation standards, contextual search, intelligent agents, seamless integration and access of external sensors or data sources, telecom fragmentation, privilege and trust systems, and a variety of others.”

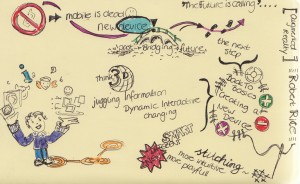

Recently Robert Rice presented at MoMo Amsterdam. Here is a drawing of him in action (picture below from wilgengebroed‘s Flickr Stream).

In his Twitter feed (@RobertRice), Robert reminds us: “By the way folks, what you see out there now as ‘augmented reality’ is not what it is going to be in two years.” Robert plans to show the first public demo of his “platform for platforms” at ISMAR 2009.

Robert is currently writing up a series of White Papers. I got a preview of the first, “The Future of Mobile – Ubiquitous Computing and Augmented Reality.” Robert points out, “AR through the lens of the mobile industry and ubiquitous computing is almost overwhelming compared to AR as marker based marketing campaign.”

I asked Robert, “What are the key take-aways for investors interested in the augmented reality field at the moment?”

“First, Mobile AR is going to be bigger than the web. Second, it is going to affect nearly every industry and aspect of life. Third, the emerging sector needs aggressive investment with long term returns. Get rich quick start ups in this space will blow through money and ultimately fail. We need smart VCs to jump in now and do it right. Fourth, AR has the potential to create a few hundred thousand jobs and entirely new professions. You want to kick start the economy or relive the golden days of 1990s innovation? Mobile AR is it.

Don’t be misguided by the gimmicky marketing applications now. Look ahead, and pay attention to what the visionaries are talking about right now. Find the right idea, help build the team, fund them, and then sit back and watch the world change. Also, AR has long term implications for smart cities, green tech, education, entertainment, and global industry. This is serious business, but it has to be done right. I’m more than happy to talk to any venture capitalist, angel investor, or company executive that wants to get a handle on what is out there, what is coming, and what the potential is. Understanding these is the first step to leveraging them for a competitive edge and building a new industry. Lastly, AR is not the same as last decade’s VR.”

Talking with Robert Rice

Picture of Robert Rice at MoMo from Guido van Nispen‘s Flickr Stream

Tish Shute: So perhaps we better start with an update on state of play with Neogence?

Robert Rice: Neogence is doing well actually. We don’t talk much about the fact that we are still a small startup and we face a lot of the usual obstacles related to that and being a small team. Fundraising has been extra difficult, mostly because people are just now beginning to see the potential in AR, but that is still colored by perceptions based on a lot of the gimmicky AR ad campaigns out there. Still, it is better than it was two years ago, when the idea of an AR startup was a bit of a joke to a lot of VCs we talked to. However, we do have an agreement from a new venture fund in Europe (which we can’t talk about yet) for our first round of funding, but we don’t expect to close that for several months.

If all goes well, we hope to debut our first public demo at ISMAR 2009 in Orlando to select individuals and a few press folks. We might release a few viral videos before then that are conceptual and about what we are building in the long run, but that depends on how things go over the next several weeks.

We are also very active in looking for and building strategic partnerships and relationships with other companies, and this is not restricted to the augmented reality or mobile sector. As I have said before, we are looking at this as a long term business venture and the industry as something that will be bigger than the web itself within ten years. We are doing typical contract work and custom AR solutions to keep the cash flow going and build up the corporate resume a bit. So, if you want something done, and better than the stuff you are seeing now with all of the generic “look at our brand in AR with markers and a webcam” you should definitely give us a call.

Tish Shute: Just to clarify, because most of the recent press has been about browser type AR like Wikitude and Layar, which are not in the purist sense AR ‘cos they do not have graphics tightly linked to the physical world. Neogence, if I am correct, is focused on building a true AR platform in the sense I just described?

Robert Rice: Hrm, I have argued with a few others about the actual definition of AR. Some people prefer a narrow and limiting view (3D overlaid on video), but I think in terms of the market and the end-user, it is better to have a wider definition. In that sense, AR is purely the blend of real and virtual, with or without full 3D overlaid on video. If we go with that, then Wikitude, Layar, Sekai, NRU, and others all fit into the AR definition.

Anyway, you are correct. We are building a true platform for AR, and this is quite different from what others are marketing as AR browser “platforms.”

There are a few problems with the “AR Browsers” approach that no one seems to be noticing. One is that they are all trying to get people to build new applications for their browsers, when they should be trying to get people to create content that they can share and browse.

Second, someone using Layar is not going to see anything that is designed for Sekai or Wikitude.

Third, the experiences are generally for one user. While I love all of these guys and think each of the teams has some real talent on it, the model is flawed until someone using Wikitude can see the same thing that someone using Layar or Sekai Camera is seeing (provided they are in the same physical location).

While we are working on our own client side technologies that we hope will be useful and integrated with every mobile device and AR browser out there, our core focus is on connecting everything and everyone together, and facilitating the growth of the industry with the tools to create content, applications, and so forth. We want to solve the really difficult technical problems (some of which most people haven’t even considered yet, because of the perspective they are looking at the potential of AR with), and make it easy for everyone else to do the cool stuff. We want to be the facilitators.

If you really want an idea of where we are going or some of what has inspired us, you have GOT to read Dream Park, Rainbows End, and The Diamond Age. If you have heard me speak anywhere or read my blog, you know that I am continually suggesting these and others.

Anyway, short answer, yes, we are building a true platform for ubiquitous mobile augmented reality, and we are absolutely the first to be doing so. I hope to demo some of this in October at ISMAR, with a full commercial launch next year (10/10/10 at 10:10am. Hehe, seriously). We will probably launch a website soon for people to start signing up and building a community now (especially if you want in on the beta testing of the whole kibosh).

Tish: So just to clarify, how will Neogence’s approach differ and fit into the growing world of Augmented Reality tools that we have now, e.g., ARToolKit, Imagination, Unifeye?

Robert: I guess you could say that we are trying to build the infrastructure for the global augmented reality network. This could be viewed as a service, or even a platform for platforms. If Neogence does its job right, anything you create using ARtoolkit, Unifeye, or Imagination would be applications you could ultimately link to, integrate with, or deploy on or through, what we are building, and not be tied to a specific set of hardware, browser, or walled garden.

Tish: You mention Neogence is going to provide a platform for platforms. Without knowing the details that sounds like a lot of centralization, which prompts the inevitable question: “Who owns the data?” Do you think other AR applications or providers would resist a “Platform for Platforms”? I know the potential centralization power of Google Wave has already got people talking about these issues (one of the comments in my recent blog post was about how Google Wave protocol may be interesting for at least some parts of augmented reality communication).

Robert: It really depends on perception and how we end up building it. We aren’t talking about creating a closed system. As far as who owns the data, it depends on what data we are talking about. For the most part, I think that if the end-user creates something, they should own it and have control over it. They should also be able to do what they want with it, independent of everything else.

This is one thing that proponents of the smart cloud and the thin/dumb client don’t like to talk about. It sounds great on paper, but when you start thinking about it, all that does is strip away power from the end user. Case in point…Amazon recently wiped every copy of George Orwell’s 1984 from all Kindle devices. They claimed they didn’t have rights to distribute/publish it and it was available by accident. The scary thing though, is that they literally went into every Kindle out there, found copies, and deleted them.

How would you like it if Microsoft suddenly decided to delete every copy of Microsoft Office? Or every file that had a .doc extension? That is a huge violation…we feel like we own what is on our computers. But with the whole cloud thing, your data is at the mercy of whoever is running the cloud servers. No privacy, no ownership, no control. And if the system breaks, all you will have is a pretty dumb device that can’t do much on its own. Now, that isn’t to say that the technical merits and benefits of a cloud model aren’t worth pursuing, they are.

But I think there needs to be some hybrid model. Don’t dumb down my computer or my smart phone, let’s keep pushing how much these devices can do. We should take full advantage of centralized and distributed systems, but in a hybrid mashup sense. That is what we are pursuing with our AR platform, while trying to protect ownership and intellectual property rights of the end user.

Tish: Earlier today I was telling you how impressed I was by Google Wave – it is quite mind blowing to experience massively multiplayer real time interaction on what will be an open internet wide platform – Wave is breaking new ground here and more than one person has mentioned its potential role in AR to me (see the comments to my recent post on Ogmento).

I know you are a strong advocate of this kind of real time shared experience being part of AR. But we are only just beginning to see it emerge via Wave on the existing web – what will it take to have this kind of real time shared experience in AR? We got briefly into the thick client, thin client, cloud versus P2P discussions – what is your approach to delivering a massively shared real time experience that is, like Wave, not confined to a walled garden?

Robert: I’m not a fan of any of those models as being stand alone or mutually exclusive. Again, the hybrid model with the best of both worlds is key. In the early stages of the emerging industry, you are likely to see some walled gardens (or perhaps a walled garden of walled gardens…).

No one knows how things are going to turn out in the next five to ten years and few people are thinking about it actively. For us though, I favor Alan Kay’s quote (pardon the paraphrasing): “To accurately predict the future, invent it.” That’s what we are doing. In the short term, there will be plenty of experimentation in the industry and a lot of model testing.

Tish: Do you think though Wave protocols might be useful as at least part of the picture for AR standards? As you point out open standards and open protocols are going to be vital for shared experiences of AR. Is it important to build off existing protocols to get the ball rolling and what do you see as being the important early protocols for AR?

Robert: I think for now, we will use a lot of existing protocols for communications and whatnot, as well as the usual standards for things like 3D models, animation, and so forth. This is only natural. However, as the industry and technology evolves, we will need entirely new ones. As far as I know there is no existing market standard for anything like the Holographic Doctor from Star Trek Voyager, and that type of thing is definitely in the pipeline for the future (sooner than you would think).

Tish: All the excitement at the arrival of the browser like mobile reality developments has been really great – I feel people are getting a taste for what it means to compute with anyone/anything, anywhere and anytime.

Wikitude started the ball rolling. And with Wikitude.me it is the first to support user generated content. Now there are Layar and Sekai Camera also. But as you mentioned to me in an earlier chat, with Layar and Wikitude opening up “there are probably half dozen other apps coming out in short order with similar functionality (even the AR twitter thing has some similarities).”

What has been most exciting to you about these developments up to this point? What will these apps/platforms need to do to stand out in a crowd? Up to now, these browser like AR experiences do nothing with close by objects. Do you see “world browsers” with near object recognition coming out in the near future? Could Wikitude do this with an integration of SRengine or Imagination?

Robert: Yes, Wikitude or Layar could do this (integrate with something else for “near” AR) and it would be a step in the right direction. Tagging things in the real world is the basic functionality that will grow from text tags to photos, videos, 3D objects, and all sorts of other types of data and meta data. This gets really fun when that data is generated by the object itself. First is just giving people the ability to tag something and share that tag with their friends, everything else grows from that. This sort of functionality is probably the most exciting in terms of near future advancement.

However, I think the idea of a stand-alone browser platform is a bit awkward…unless you also consider firefox a website browser platform. After all, you can create widgets (applications) for it. Anyway, the point is having access to the same data…if you put three people in a room, one for each browser, they should see and experience the same content, although the interface might be different (based on what browser and of course which hardware they are using). This means there needs to be some communication between whatever servers they are storing their data on (meaning, user tags) and some standard for how those tags are created.

Of course, if all they are doing is grabbing the GPS coordinates of the nearest subway station and telling you how far it is and in what direction, then they should all be able to see the same thing, regardless of the platform. But then, that isn’t really interesting is it? I could get the same info on a laptop with google maps.
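As an aside for developers, the “nearest subway station” case Robert describes comes down to two well-known formulas: the haversine great-circle distance and the initial compass bearing. The sketch below is only an illustration of that arithmetic. The `GeoTag` record, the function names, and the sample coordinates are all hypothetical, and nothing here reflects how Wikitude, Layar, or Sekai Camera actually work. The point it tries to make is the one above: given the same tag data, any client would compute the same answer.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres (spherical approximation)


@dataclass(frozen=True)
class GeoTag:
    """Hypothetical shared tag record: a label pinned to a coordinate."""
    label: str
    lat: float  # latitude in degrees
    lon: float  # longitude in degrees


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres, using the haversine formula."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def bearing_deg(lat1, lon1, lat2, lon2):
    """Initial compass bearing from point 1 to point 2, in degrees (0 = north, 90 = east)."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    y = math.sin(dlmb) * math.cos(p2)
    x = math.cos(p1) * math.sin(p2) - math.sin(p1) * math.cos(p2) * math.cos(dlmb)
    return math.degrees(math.atan2(y, x)) % 360


def nearest(user_lat, user_lon, tags):
    """Return (tag, distance in metres, bearing in degrees) for the closest tag."""
    tag = min(tags, key=lambda t: distance_m(user_lat, user_lon, t.lat, t.lon))
    return (
        tag,
        distance_m(user_lat, user_lon, tag.lat, tag.lon),
        bearing_deg(user_lat, user_lon, tag.lat, tag.lon),
    )


if __name__ == "__main__":
    # Made-up coordinates, for illustration only.
    stations = [GeoTag("Station A", 40.7580, -73.9855), GeoTag("Station B", 40.7527, -73.9772)]
    tag, dist, brg = nearest(40.7549, -73.9840, stations)
    print(f"{tag.label}: {dist:.0f} m away, bearing {brg:.0f} degrees")
```

Because the calculation depends only on the shared coordinates, the differences between clients in this simple case are in presentation rather than content, which is why the harder interoperability questions lie elsewhere.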

This is part of the problem right now though…no one seems to be thinking about the bigger picture much. All of the effort is either on making the next cool ad campaign for a car or a movie, or creating a tool to tell you where the nearest thingamajig is, but in a really cool fashion on a mobile device.

No one is talking much about filtering data, privilege systems, standards, third party tools, interoperability, and so on. There is also little conversation about where hardware is going. Right now everyone is developing software based on what hardware is available. This needs to change where hardware is being developed to take advantage of new software coming out (this happened in the PC industry a while back and growth accelerated dramatically).

These are some of the reasons why I led the effort to start the AR Consortium. We brought CEOs from 8 different AR companies and startups together to start talking about these issues. We are still getting organized and have plans to expand the membership to other companies, but we want to do this right and we aren’t rushing things. The important thing is that we have started and there is at least a line of communication open now, where there wasn’t before.

I would expect to see the early movers expanding what they offer very soon, and they will probably lead the way in the short term. Definitely keep an eye on the companies involved in the AR Consortium. There are lots of very smart and motivated people there, and they are far ahead of all the experimental dabbling in AR we are beginning to see on youtube, twitter, and elsewhere.

Tish: When we had a discussion earlier about what were the basics for an AR platform and an AR browser, you talked about the difference between tools, a platform, and an AR browser – like Wikitude and Layar, which should be about features/functionality, e.g. to create treasure hunts, AR geocaching, invisible AR yellow sticky notes you can leave at restaurants you don’t like, etc. Also you noted it should let you explore (browse) multiple formats, and open content for AR – any data, information, or media that is linked to something in the real world and the visualization/interaction with the same.

Wikitude is a stepping stone to a true browser by your definition. But are we also seeing what you would define as an AR platform emerging – Unifeye, Wikitude (you can recap your definition if you like too)?

I think Wikitude hopes to provide the lego blocks for augmented reality readers, browsers, applications, tools, and platforms?

Robert: I expect some segmentation among the various AR companies that are out now, as they find their individual strengths and focus on them. Some will emphasize the client software (the browser), others will develop robust tools for creating content, SDKs/APIs will advance and facilitate rapid development of applications, etc. Neogence is ultimately working on the glue in the middle that ties everything together, makes it massively multiuser, persistent, and ubiquitous. Things like Unity3D have the potential to fill a need in the middleware space.

Tish: I know Blair MacIntyre (see my interview with Blair here) and others are using Unity3D as an AR client. Could Unity3D become increasingly important?

Robert: It has the potential to become a favored middleware for providing the rendering layer. It already works nicely in regular browsers, and on several mobile platforms. Why code all the graphics rendering stuff from scratch when you can just license something and extend its features with AR functionality?

Tish: Now to ask your own question back to you! There seems to be a lot of reason to think that, eventually, there will be the kind of access to the iphone video API that augmented reality really requires, and by that I mean more than we will get with OS 3.1, which is rumored to deliver only about half of what we really need for AR on the iphone – “not truly useful when you want to align video with graphics.” So:

“The iphone…future or failure? Seemingly anti-developer stance regarding augmented reality, and only a sliver of the global market share. Are we letting the short term glitz of Apple and the iPhone fad pull us in the wrong direction? Shouldn’t we be focusing on symbian devices that have the lion’s share of the market? Or should we be looking more at either other OSs (winmobile, android) or not at all and trying to create a new platform that is more MID and less smart phone with a hardware partner?”

Robert: Apple and the iphone are a bit problematic right now. There is no way I can go to a venture capitalist (at least in North America) and say hey we are building awesome AR applications for winmobile or symbian…they would either laugh or they simply wouldn’t get it. There is this false perception that the iphone is the ultimate mobile device, it is the sexiest, and the only thing that people want. Everyone wants a demo on the iphone, the media is mostly interested in iphone developments, and the apple fanatic market could give a fig about other devices. Other devices may have a larger market share or even better hardware, but we have to focus on the iphone right now at least in the demo stage to get any market attention and traction worth the time and effort.

In the future though, unless Apple changes its stance with their SDK and APIs, and starts adding hardware that is key for mobile AR (beyond what is there now), the market will move on without them. This is a really easy decision to make given Apple’s draconian policies and the fact that their percentage of the global market is minuscule. The smart companies are looking at the whole picture and not putting all of their eggs in the Apple basket.

Of course, once the wearable displays are commercially viable everything changes. Wearable computers with small screens or even no screens are going to be what everyone wants. The interface will go from handheld touch screens to virtual holographic interfaces that you interact with using your bare hands.

So for now (the immediate short term), it’s all about the iphone. Taking mobile ubiquitous AR to the global market and building for the future will be based on something else. Hardware risks becoming a commodity or a closed platform. Do you really want to buy the Apple iGlasses and only see AR content that is compatible, where your best friend has a pair of WinGlasses and sees something entirely different? No. The hardware, and the client software (what people are calling the ar browser now) will become common and it won’t matter what brand you use, they will all be accessing the same content.

But at least for the foreseeable future, we are building software for specific hardware, and the sexiest mobile on the block is the iphone. The second someone comes out with something much better and the paradigm shifts (software driving hardware instead of vice versa) everything changes.

Tish: How is the quest for sexy AR eyewear going? I know we were checking out the Japanese eyewear with Adam Johnson from Genkii just now. For the Neogence project – as you are going for a fully developed model of AR, doesn’t this necessitate going beyond the iphone and getting the hardware companies moving on the eyewear?

Robert: The guys making wearable displays really need to get off the pot and stop paying lip service to mobile AR. If they don’t do something quick, I, and others, are going to be scouring the planet looking for someone capable of building the lightweight stylish wearable displays with transparent lenses we are begging for. We aren’t going to be waiting around for hardware anymore. The AR Pandora’s box has been opened. I should note that many of us (AR Consortium members) have had less than pleasant experiences or communications with the half dozen companies or so that are making wearable displays. Either their visual design is terrible, the materials feel flimsy, the field of view is limited, or the companies are preoccupied with other business and government contracts. Any attention to the growing AR market is an afterthought and in a few cases condescending. AR is going to be a billion dollar industry in a very short time, and these guys are just leaving money on the table. If they were smart, they would be begging the CEOs from the AR Consortium to fly out to their offices and collaborate on building a pair of wicked sick glasses. The smart phone manufacturers should be doing the same thing, but I have to say that they at least seem to have some ambition and zeal to create better devices, so I can’t really complain too much there.

Anyway, to answer the rest of your question, we have to assume that the hardware guys, especially regarding the eyewear, are going to take a long time to develop and release the things we need for the ultimate AR experience. So, our goal is to start building things now for what is available. That means scaling things down and handicapping what AR can do, so it works on the “sexy” iphone. The important thing though is to start creating applications -now- so when the glasses are commercially available, there will be a wealth of content for people to access and use on day one.

As long as Apple isn’t playing nice, it is going to hurt everyone. Is it any surprise that they shut down Google Voice? There is a huge opportunity for someone to step up and leapfrog the rest of the industry. Give us the hardware and we will create amazing software for it. Don’t compete with the iphone, surpass it.

Tish: What is the state of play of current AR technology and toolkits?

Robert: The current crop of AR technology and toolkits is absolutely critical for this stage of the industry, and everyone should be leveraging it as much as possible. I talk down marker and image based tracking a lot, but I also like to point out that it is the necessary baseline that the industry is going to be built on. The problem is that there is only so much you can do with marker driven apps, and as creative people and marketing types start conceptualizing about all sorts of cool stuff for the future, they risk setting the expectations too high. It is one thing to show someone the future, it is another to say this is the future and it’s happening right now. This is why I cringe every time I see a conceptual video presented as “our product DOES this” instead of “our product WILL DO this.” Something that simple can still cause the butterfly effect of raising expectations too high and contribute to overhyping.

Tish: One of the things that seems very exciting about the new Ogmento partnership is that experienced content producers Brad Foxhoven and Brian Selzer from Blockade are now taking a leading role in AR. What are the most exciting directions for content that you see emerging for AR in the next 12 months?

Robert: Virtual (well, augmented) pets, and multiuser mobile AR games (2-4 people) are probably going to lead in the next 12 months for content. Easy, accessible, engaging.

Tish: And are you at Neogence also involved in content partnerships?

Robert: Yes, we are in the process of finalizing some content partnerships with an eye for long term relationships. We are specifically looking for partners that want to find substantive ways to leverage AR technology, and not use it as a superficial gimmick or attraction that wears off after five minutes. I’m still cringing over the Procter & Gamble Always campaign with AR.

Tish: So back to your observation about some of the tricky problems re creating a true global massively multiuser, ubiquitous, mobile AR platform – what are some of the main obstacles to this mission in your view (aside from getting investment)?

Robert: Trying to explain it to people. The technical problems we can handle or have already solved. But trying to communicate what exactly we are doing is still tough. Not because it is overly complicated, but rather because it is so new and different. People are having a hard time grasping augmented reality beyond marker/webcam.

Tish: Which AR tools are most important right now?

Robert: Content is critical right now to show what the technology is capable of and to continue building the presence of augmented reality in the public mind. The big benefit to integrated / unified platforms now is speed of development for content. I think that the flash artoolkit + papervision is rocking the planet right now. It is accessible, easy to learn, and lets people create something very quickly. More tools and middleware are coming out and this increases options for designers and developers.

Tish: What are your favorite papervision apps?

Robert: Hrm, I don’t have a favorite papervision app just yet, although I think the tech is solid. I expect to see a lot of stuff built on that platform in the near future. Especially as more ad agencies get on the bandwagon and start telling their IT guys to learn how to program flash so they can make something. Have you seen www.ronaldchevalier.com? Not so much for the actual AR stuff, but because the whole thing is just brilliant. It’s exactly what some cult figure spiritual guru would do with AR. I wish I had thought of it first actually. This is probably one of the best -seamless- implementations of AR in marketing where it fits…it isn’t just jammed in there for the sake of saying they used AR.

Tish: Do you think Apple is going to open the iphone to the full potential of augmented reality anytime soon – a lot of expectations have been raised?

Robert: Apple is like that guy who has a party at his house and owns this really awesome state of the art home theater in his basement, but makes everyone watch a movie in the living room on a regular TV with a VCR.

They need to get over themselves and quit being a wet blanket. Otherwise, we are taking the beer and pizza we brought, and going to someone else’s house. Sorry, the Apple thing is a bit of a sore point with me.

Tish: But will people leave all that candy and soda at the appstore?

Robert: I tell you what though, there is an opportunity for certain mobile phone manufacturers to give me a call and start talking to Neogence and the other members of the Consortium. We have some ideas and specs that could have a radical impact on the mobile market and stuff the iPhone in a box. Hint hint.

Tish: So what is your vision for the AR Consortium? I know it kicked off with a letter to Apple about the video API. What is the next step? There was a lot of hope that this year would be big for MIDs, but this really hasn’t happened yet. Do you think there is hope for a MID takeoff despite the lousy economy?

Robert: MIDs? No, not yet. Smart phones are too lucrative and too hot. It isn’t time yet for the MID to go mainstream. For that to happen, there needs to be a driving need (cough ubiquitous AR cough).

The AR consortium is mostly an informal affiliation. I expect that representatives from each member will probably meet at every significant conference to catch up over drinks. We are also going to be planning for our own members conference at least once a year. That will happen after we expand the membership though.

The main idea behind the consortium though was to open up a channel of communication between the CEOs so we could work together on standards, solving problems, collaborating, forming some partnerships, and using the collective to bang on the doors of companies like Apple and others. There is power in a group.

Tish: You mentioned there is a whole long conversation we can have about getting the eyewear. As you point out, true AR eyewear changes everything. Can you give a little road map of where this has to go?

Robert: There are essentially four or five main approaches, depending on whether or not you make the lenses special or if they are just plain. You would normally want them to be plain so people with prescription lenses wouldn’t have problems and would have the option to switch them out. Some types use a more prismatic approach for top down projection, or a corner piece mounts lasers and bounces them off the lens into the eye. Another approach is embedding OLEDs or something else into the lenses themselves.

I really like the Lumus approach, but their product design isn’t quite there yet. If the wearables don’t look cool, people won’t use them. To be honest, if I had the money, I’d probably ask the Art Lebedev guys to design them based on someone else’s optical engineering. They designed the Optimus Maximus OLED keyboard… brilliant industrial designers, loaded with engineers too. If these guys couldn’t build the glasses and make them look damn bad ass, I’d be shocked. Heck, I bet they could build the next gen MID while they were at it.

Tish: Getting the hardware innovation and software innovation feeding into each other would be really great.

Robert: Absolutely.

Tish: That would push the eyewear forward too, wouldn’t it?

Robert: All it takes is one, and then the competitive landscape would fire right up.

Tish: What applications would accurate GPS enable?

Robert: Everything. For example, if you know exactly where the phone is and where it is facing, you can put it on a table and hit a button, then move it somewhere else and do the same thing. In a few minutes, you have a nearly accurate “mental” model of the whole place. Now you go back and start dropping virtual flower pots everywhere.

This is one area where I think the smart phone guys are missing the boat and taking the cheap route. It is possible to have very accurate GPS (down to a six inch area) with better chips and firmware, but it is cheaper to stick in old tech. Most apps today don’t need that hyper accuracy, so they aren’t bothering. Mobile AR though, that’s a different story.

With that level of accuracy, you would know exactly where the mobile device is, so all you would need to know is the direction it is facing (orientation), and you could solve one of the problems with registering exactly where 3D objects and augmented media are (it is more complicated than I am describing it, but we don’t need to get into that much detail here). You wouldn’t need markers anymore.

Tish: Isn’t Wikitude doing this with Wikitude.me, their tagging app?

Robert: Not really. That type of approach is on a very large scale, using the accelerometers, compass, and GPS to determine where you are and what is in the distance. They (and others like Layar) don’t handle “near” AR. They effectively poll your GPS and then check a database to see what is nearby and what degree/distance it is, and then they draw a representation on the screen. They don’t even need a mobile device’s camera at all.

Even if they did things up close, it’s still based on finding landmarks or on things that are broadcasting their location. For example, if they were standing near me, they might get “robert, 37 degrees, 15 meters away” but they wouldn’t be tracking me exactly as I walk around or have the ability to overlay graphics on ME.
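For readers who want to see what that “poll your GPS, check a database, draw a representation” loop roughly amounts to, here is a minimal Python sketch. It is not Wikitude’s or Layar’s actual code. The function names, the in-memory list standing in for the database, the 500 meter search radius, and the 60 degree field of view are all assumptions made up for illustration. The distance and bearing math is the standard haversine and initial-bearing calculation on a spherical Earth.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius, spherical approximation


def distance_and_bearing(lat1, lon1, lat2, lon2):
    """Return (distance in meters, compass bearing in degrees) from point 1 to point 2."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)

    # Haversine formula for great-circle distance.
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    distance = 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

    # Initial bearing, measured clockwise from north.
    y = math.sin(dlam) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlam)
    bearing = (math.degrees(math.atan2(y, x)) + 360) % 360
    return distance, bearing


def nearby_labels(user_lat, user_lon, heading_deg, pois,
                  max_distance_m=500.0, fov_deg=60.0):
    """Hypothetical helper: list the points of interest the user is facing.

    `pois` is a list of (name, lat, lon) tuples standing in for the database.
    Each result is (name, degrees left/right of the heading, meters away).
    """
    labels = []
    for name, lat, lon in pois:
        distance, bearing = distance_and_bearing(user_lat, user_lon, lat, lon)
        if distance > max_distance_m:
            continue
        # Angle relative to where the device is pointing, in the range [-180, 180).
        relative = (bearing - heading_deg + 180) % 360 - 180
        if abs(relative) <= fov_deg / 2:
            labels.append((name, round(relative), round(distance)))
    return labels


if __name__ == "__main__":
    # Made-up coordinates: one point just north of the user, one just east.
    pois = [("cafe", 0.001, 0.0), ("museum", 0.0, 0.001)]
    for label in nearby_labels(0.0, 0.0, heading_deg=0.0, pois=pois):
        print(label)
```

Note that nothing here touches the camera image. Only position and compass heading go in, which is why this style of AR cannot track a nearby person or object precisely.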

Tish: I retweeted your “#ar marketing using ARToolkit + flash (markers/webcams) = Photoshop pagecurl <six months. Bad design kills innovation.” I know you like Dr Chevalier though! What are some of the other AR marketing projects that you like? What would you like to see in terms of innovation in the next 6 months?

Robert: The marker/webcam approach is already becoming overused and cliche (tremendously fast). Older readers will remember the ubiquitous photoshop page curl that adorned nearly every website and graphic on the internet back in the day. It was horrible. Yes, the Dr. Chevalier stuff cracks me up.

I want to see some big companies or ad agencies really try to do something different with AR, preferably mobile. Take some risks, do something different. Don’t follow the crowd. Innovation? I want to see some wearable displays with transparent lenses, I want a mobile device specifically designed for ubiquitous AR, I want to see some experimenting with AR in the green tech sector, and I’d like to see someone get that GiFi wireless technology from that researcher in Australia and jam it into a smart mobile. I would also like my flying car and lunar vacation now, thank you. It is almost 2010 and no one has found that black obelisk yet.

Tish: So a few closing thoughts! What do you see as the next big thing? Hopes for the AR Consortium? Biggest obstacle for commercial AR? And what is the coolest thing you have seen this year?!

Robert: The next big thing is what I’m working on hahaha. I hope the AR Consortium will grow and be the active catalyst in making AR mainstream, practical, and world changing.

The biggest obstacle is making sure that the right funding finds the right developers to develop the right technology and create kick ass applications.

The coolest thing I’ve seen this year would probably be the facade projection stuff (see below): Now, imagine that, but without the projector. That’s part of what I envision for AR in the future.

August 3rd, 2009 at 7:46 pm

Tish, when you ask Robert “…what is your approach to delivering a massively shared real time experience that is like Wave not confined to a walled garden?” that’s an extremely relevant question + one that needs to be addressed while considering the entirety of the Reality-Virtual Continuum. I’ve recently finished a series of articles addressing this: the framework I’ve developed is termed “Social Tesseracting”:

“In _Social Tesseracting_: Part 1, we learnt that:

1. Dimensionality defines working concepts of reality.

2. Theoretically, dimensionality can also expand to define a spectrum of nascent social actions.

3. These particular social actions encompass communication trends defined by synthetic interactions.

4. Synthetic interactions create social froth that can be produced geophysically or geolocatively. Both connection types depend on relevant electronic gesturing.

5. This mix of synthetic interactions and electronic gesturing provokes a descriptive framework of this aggregated sodality. This framework is termed Social Tesseracting.

6. In order to adequately formulate Social Tesseracting, contemporary theorists need to extend “valid” reality definitions based currently on the endpoint of the geophysical.”

The series examines the markers [with no AR-pun intended;)] associated with this system, including:

“e) Information Deformation: akin to process centering, this Social Tesseraction involves a shift in the very definition of information: ‘Information may be defined as the characteristics of the output of a process, these being informative about the process and the input. This discipline independent definition may be applied to all domains, from physics to epistemology.’

These deformed systems of data are constantly in flux and available for perpetual revision. Examples include:

* cloud-based applications

* constantly transliterated google waves.

Users are able to simultaneously modify, update and adapt their input in real time. This type of liminal practice results in a deformation of current information architectures. Although traditional information construction may be flexible over time, it still demands unitary data snapshots for knowledge formation. Deforming such data in real time acts to fundamentally alter meaning production. Socially structured input is the keystone of such a dynamic, perpetually fluctuating system.

Here the notion of Social Froth takes on a new level of importance: information becomes a constantly shifting construct with variable endpoints. Rewiring information in such a way radically changes its cohesive nature. This in turn affects:

* authorship

* politics

* communication and media

* education

* book publishing and academia [think: the perpetuation of potentially obsolete content systems]

* the scientific method

* disciplines dependent on referential instruction [think: History or Commerce].”

…and…

“g) Decline of Silo Ghettos: as information deformation impacts knowledge formation, there’s an increasing need to provide social tesseractors with comprehensive dimensional engagement. This type of borderless interaction deforms monostreams into cross-channelled productions. Social tesseracts assist in addressing the somewhat restrictive walled garden approach to software and platform production [think: the frustration levels encountered whilst experiencing the locked door syndrome].

Google Wave is one system that removes such constraints and allows users to input directly into previously distinct arenas. Other instances of interoperable systems that require the reorientation of Information Silos:

* augmented applications that encourage a pairing of geolocative and geophysical needs.

* bridging software that links previously disparate platforms together [think: IRC-to-Second Life Chat Bridge]”.

The series can be accessed here:

http://arsvirtuafoundation.org/research/2009/03/01/_social-tesseracting_-part-1/

Regards,

@Netwurker [Mez Breeze]

August 4th, 2009 at 6:49 am

Another wonderful interview, a great read.

Random comments:

“First, Mobile AR is going to be bigger than the web. Second, it is going to affect nearly every industry and aspect of life. Third, the emerging sector needs aggressive investment with long term returns. Get rich quick start ups in this space will blow through money and ultimately fail. We need smart VCs to jump in now and do it right. Fourth, AR has the potential to create a few hundred thousand jobs and entirely new professions. You want to kick start the economy or relive the golden days of 1990s innovation? Mobile AR is it.”

This sort of quote I find massively inspiring.

And it’s all true. Even just a logical extrapolation of technology… smaller, cheaper, faster… seems to inevitably lead to world-changing potential uses for AR.

And yes, huge new markets will be created, I don’t think we can fully anticipate what yet.

Maybe there will be a need for people to constantly be modeling, fixing errors, and generally making sure the real world and the virtual are in sync. Maybe there will be massively more opportunities for individuals to make money designing stuff… real-world objects effectively being reskinnable, and without the need for controlling distribution networks, this gives “the little guy” more opportunities.

Of course though, I also see massive job losses too. AR… the ideal, perfect AR… would also make a lot of industries redundant, or at least hugely reduce demand.

But I don’t see this as a bad thing. Reducing humanity’s dependency on physical goods is not only better for the environment, but frees people up to have more interesting jobs. The energy and effort it takes to make a virtual product is vastly less than a physical one, so they can be vastly cheaper.

AR will be hugely disruptive to economies, but overall I think the effects will be positive, as it means all of us can do more, with a lot less. There might well be a difficult phase though, for those that don’t see the transition coming.

“Third the experiences are generally for one user. While I love all of these guys and think each of the teams has some real talent on it, the model is flawed until someone using Wikitude can see the same thing that someone using Layar or Sekai camera is seeing (provided they are in the same physical location).”

That pretty much sums up my current thinking.

I feel a real buzz from this as Robert seems to really “get it”, and it’s exciting to know these problems are being worked on.

I mean, it would be crazy to need to switch browsers to look at different websites.

“But I think there needs to be some hybrid model. Don’t dumb down my computer or my smart phone, let’s keep pushing how much these devices can do. We should take full advantage of centralized and distributed systems, but in a hybrid mashup sense. That is what we are pursuing with our AR platform, while trying to protect ownership and intellectual property rights of the end user.”

That’s also very positive.

I would point out, however, that Google Wave will almost certainly be Gears-compliant.

(which to me is a fairly nice solution to the “what if I lose faith in who’s hosting” problem).

Wave can also be hosted by anything, as it’s an open protocol (so if you really want, you can self-host).

But yes, existing protocols to start with, moving onto more custom stuff later.

I would hope for something like the HTML DOM system, manipulable with JavaScript or JavaScript-like possibilities.

So we would progress from loading static meshes, to animating meshes, to -dynamically- animated meshes based on real time user data.

Think the equivalent of the Canvas element in HTML5.

Will take a while to get there though.

I also worry slightly about how easy it will be to have multiple streams of data visible at once.

This sounds easy enough with static objects, but to run say, an “AR Game”, at the same time as having a chat window, and maybe a map, could get tricky.

The game makers will, presumably, want to use the hardware “to the max” and probably not want to be confined to restrictive mark-up like standards. But, on the other hand, their graphics have to be blended somehow with graphics from other sources. A simple overlay isn’t enough, as some objects should be obscuring others and vice versa.

How does their code co-operate with other people’s to do this?

In the long run, this might not end up so much a problem for an AR Browser/platform, so much as one for graphic driver creators.

“Robert: Trying to explain it to people. The technical problems we can handle or have already solved. But trying to communicate what exactly we are doing is still tough. Not because it is overly complicated, but rather because it is so new and different. People are having a hard time grasping augmented reality beyond marker/webcam.”

Someone needs to bring Denno Coil to the West.

That show, incidentally, was made by an educational channel in Japan.

While being pure entertainment, it also prepped its viewers for AR. Everyone that watched that got the concept instantly.

We need a mainstream show here to do the same. Not just the odd contact lens in one episode, or Terminator-style vision… but a mainstream TV show using AR as a constant.

As far as technology goes, it’s about as near-future as you can get. But unlike other tech, it’s still hardly touched by sci-fi shows.

–

“This is one area where I think the smart phone guys are missing the boat and taking the cheap route. It is possible to have very accurate GPS (down to a six inch area) with better chips and firmware, but it is cheaper to stick in old tech. Most apps today dont need that hyper accuracy, so they aren’t bothering. Mobile AR though, thats a different story.”

This annoys me too.

I only hope that Galileo’s satellite array will help with this.

More sources of positioning signals should make it cheaper to be more accurate.

“Halting State” depicts Galileo as being the basis for persistent AR worlds.

==

Btw, is it me or does Dr. Chevalier seem like the brother of Garth Marenghi? (Darkplace).

August 4th, 2009 at 5:26 pm

Thank you Mez for this awesome brainsplosion! And thank you Dark Flame for all these thoughtful and excellent points (your comments, Thomas, have been inspiring me for a while as you know). I just drove from Vermont to Maine through many Moose crossings so I have not had time to respond yet but this is exciting stuff.

August 5th, 2009 at 6:07 am

I’m just an enthusiastic fan

Now I’ll go and try to work out what on earth Mez is trying to say ^_-

August 17th, 2009 at 6:14 pm

Thanks, Tish. This is a great resource!